Generative AI presents enormous opportunities to transform how scientific and scholarly research is carried out. Faced with the pressure to publish, a more complex research environment to navigate, and growing administrative demands, researchers need better tools available to them. To meet these challenges, Clarivate™ is developing cutting-edge AI technology that will remove roadblocks in the research and publishing process and ultimately contribute to the betterment of research. We previously shared plans to develop and embed market-leading generative AI, including conversational discovery, into our academic solutions, and are delighted to announce that the Web of Science™ AI Research Assistant will be available for beta testing this December.

While generative AI’s potential to save researchers time and reduce workload is clear, there are concerns with employing the technology in a research setting. When generative AI fabricates scholarly references, for example, it shows how the technology, used without due care, can undermine research integrity and erode trust in scientific output. Researchers may rightly question whether they can trust the technology at this early stage in its lifecycle. Generative AI must be developed and implemented in research tools responsibly, which calls for a measured yet agile product development approach that includes input and guidance from the research community.

Partnering with the community to innovate

This is why our approach to implementing AI in the Web of Science is to partner with researchers and libraries to ensure the features we implement solve the most pertinent problems in ways that deliver trust in the technology and its application.

AI innovations already implemented in the Web of Science Core Collection™, the world’s only publisher-neutral, multidisciplinary citation index, were heavily guided by user feedback; enriched cited references are a good example. In 2021, product teams and data scientists, guided by the Institute for Scientific Information™, enriched cited reference data with additional facets to help users understand how and why an author cited a paper.

To ensure that we were capturing and presenting the most useful information for researchers, we consulted institutional development partners and carried out extensive user testing early in the process. To ensure accuracy and correctness, we collaborated with these partners to curate reliable, trustworthy data from the ground up. The outcome is a suite of features that help users conduct more targeted searches, locate must-read papers and delve into the ‘why’ behind citation counts.

Delivering useful information that improves the research process

We develop our products with the goal of serving up helpful insights along the user journey. Recommendations, enhanced data points and research indicators assist research stakeholders in determining their next step. Technology cannot make decisions for researchers, publishers, or organizational leaders, but it can greatly reduce manual effort spent on gathering and synthesizing information for further review.

To save time gathering information, AI is frequently leveraged to simplify the search experience for users. AI-enabled topic and keyword suggestions in the Web of Science help users quickly and efficiently narrow their searches and improve the relevancy of their results. Additional AI search improvements on the roadmap will help Web of Science users improve their queries with minimal effort, including semantic search, typeaheads, autocorrect, and personalized experiences.

When it comes to synthesizing information, literature reviews are an area with high potential for AI implementation. Literature reviews are valuable to the community because they provide a guide to a discipline or research topic, a helpful input for determining how to take research forward. There are many ways to summarize the research landscape on a topic, and no single method is the correct one. Employing generative AI to automate literature reviews means that these helpful inputs into the research process can be delivered on a much larger scale.

While AI is well-positioned to improve research, researchers must feel confident in AI-delivered insights to consider them useful. The utility of generative AI responses ultimately depends on the trustworthiness of the data source used to generate them. Relying on the carefully curated, meticulously indexed Web of Science Core Collection dataset mitigates some risks posed by generative AI. Our selection and indexing policies ensure that users are discovering research from trusted, reliable sources evaluated by our independent, in-house editorial experts. Our consistent, accurate and complete indexing has created a thorough record of over a century of research.

Supporting researchers with smarter tools

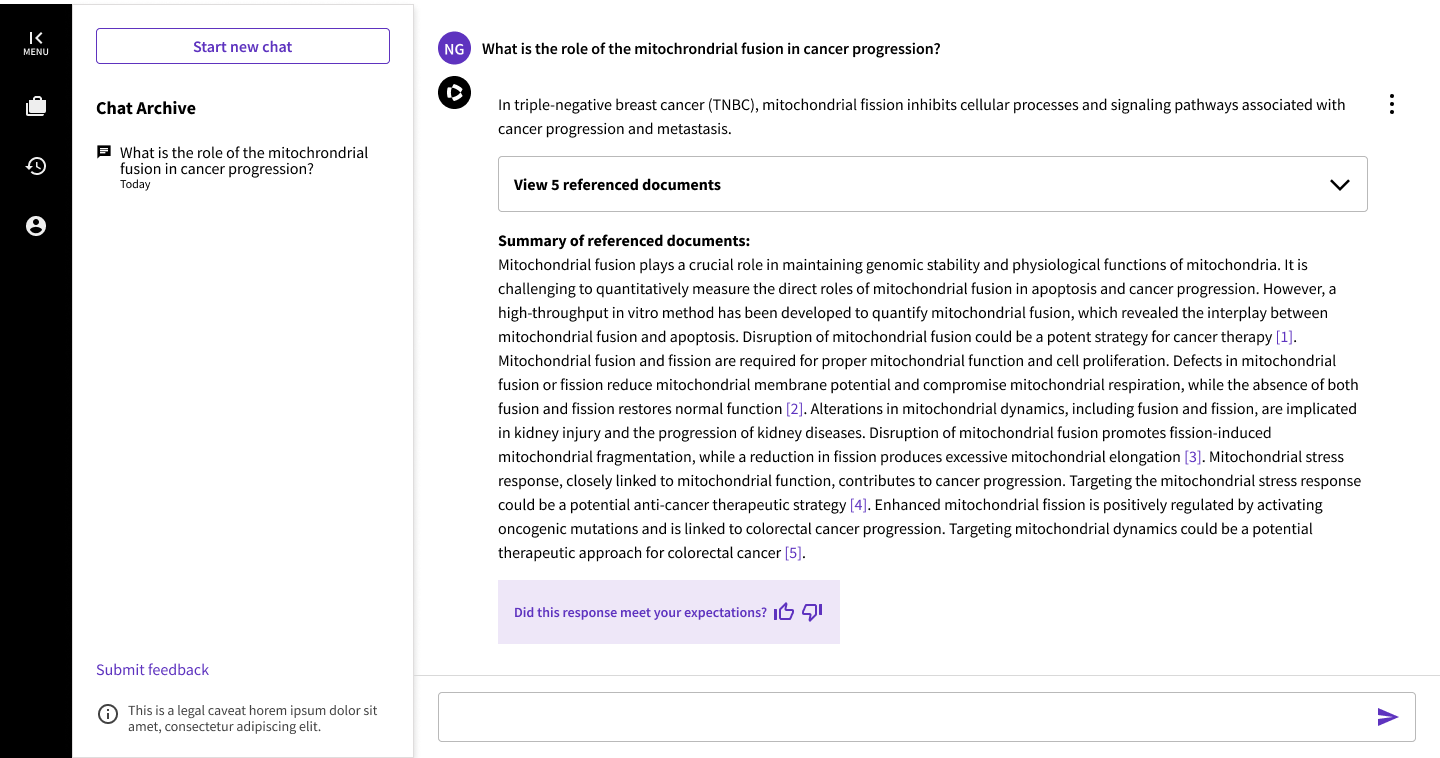

We are implementing AI in the Web of Science with a focus on automating tasks that are labor intensive for researchers but do not require expertise, such as extracting, restructuring, summarizing, and presenting requested information from our meticulously structured metadata. The next step in our AI journey is the Web of Science AI Research Assistant, a generative-AI-powered tool that opens new modes of utility for users. Researchers can ask questions and more easily uncover the right answers from Web of Science data. The AI Research Assistant cuts through the complexity of the data, makes connections between articles, and helps researchers browse concise summaries of articles and result sets. With this new conversational capability, the Web of Science user will be more agile than ever, freeing up valuable time for activities that add new knowledge to the ecosystem.

We are consulting with our university development partners to ensure that we maximize the strength and suitability of generative AI for researchers. We are focused on ensuring the quality of the Web of Science AI Research Assistant before releasing it widely.

“We’re happy to be part of the Web of Science Development Partner Program, it will allow us to know deeply the new AI Research Assistant and how it can help our researchers.”

Combining our high-quality, reliable data and expertise, we partner with our customers to help them navigate the opportunities and risks that generative AI poses in a way that ensures confidence and value. Our collaboration with the research community is well underway to further develop this new capability. Let us know if you want to join the conversation.

Interested in becoming a beta tester? Let us know at wosdevpartners@clarivate.com.